Every development team has a version of this problem: somewhere in the workflow, there is a task that involves manually moving data from one place to another, reformatting it, and either emailing the results or pasting them into a dashboard. It takes 20-45 minutes. It happens weekly or daily. Nobody has automated it because doing it once was always easier than building something - but "just doing it" has now gone on for two years, and the cumulative cost is significant.

Data automation is one of the highest-leverage investments a development team can make, but it tends to get deprioritized because the short-term cost (building the automation) is visible and the long-term savings (hours of manual work eliminated) are distributed invisibly across dozens of future sessions. This article is about making that case concretely and providing a practical framework for getting started.

Identifying Which Tasks Are Worth Automating

Not every repetitive data task is worth automating. The decision depends on frequency, volume, and error cost.

A useful framework: estimate the annual cost of the task in developer hours. A task that takes 1 hour and happens twice a week costs roughly 100 hours per year (2 hours x 52 weeks = 104). At an average loaded developer rate, that is a significant budget. Build or buy automation that costs less than 100 hours to implement and maintain in its first year, and you have a positive ROI from year one.
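
The arithmetic above can be sketched in a few lines of Python. This is a minimal illustration, not a costing model: the function names are made up for this example, and the 40 build hours and 12 maintenance hours are hypothetical figures chosen only to show the calculation.

```python
def annual_hours(hours_per_run: float, runs_per_week: float, weeks_per_year: int = 52) -> float:
    """Hours per year spent doing the task by hand."""
    return hours_per_run * runs_per_week * weeks_per_year


def first_year_net_hours(manual_hours: float, build_hours: float, maintenance_hours: float) -> float:
    """Hours saved in year one after paying for build and maintenance."""
    return manual_hours - (build_hours + maintenance_hours)


# The example from the text: 1 hour, twice a week.
manual = annual_hours(hours_per_run=1, runs_per_week=2)

# Hypothetical costs, for illustration only.
net = first_year_net_hours(manual, build_hours=40, maintenance_hours=12)

print(f"manual cost: {manual} h/year, first-year net saving: {net} h")
```

A positive result means the automation pays for itself within the first year; a negative one means the task is probably too cheap or too rare to justify the build.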

Tasks that warrant serious automation consideration:

- Reporting and dashboards populated from multiple sources: If someone is manually downloading exports from 3 platforms and combining them in a spreadsheet before sending to stakeholders every Monday, this is automatable in a morning with the right tools.

- Data synchronization between systems: Keeping customer records consistent between a CRM, a support tool, and a billing system is classic manual work that automation handles far more reliably.

- Batch processing and transformation: Any task involving applying the same transformations to large sets of records - cleaning, normalizing, enriching - is a strong automation candidate.

- Alerting and monitoring: Manually checking whether a metric is above or below a threshold is work that should be done by a monitoring system, not a human.

Tasks that are not good automation candidates include one-time data migrations (automate only if the migration will repeat), tasks with high ambiguity that require human judgment to complete, and tasks where the frequency is too low to justify the build cost.

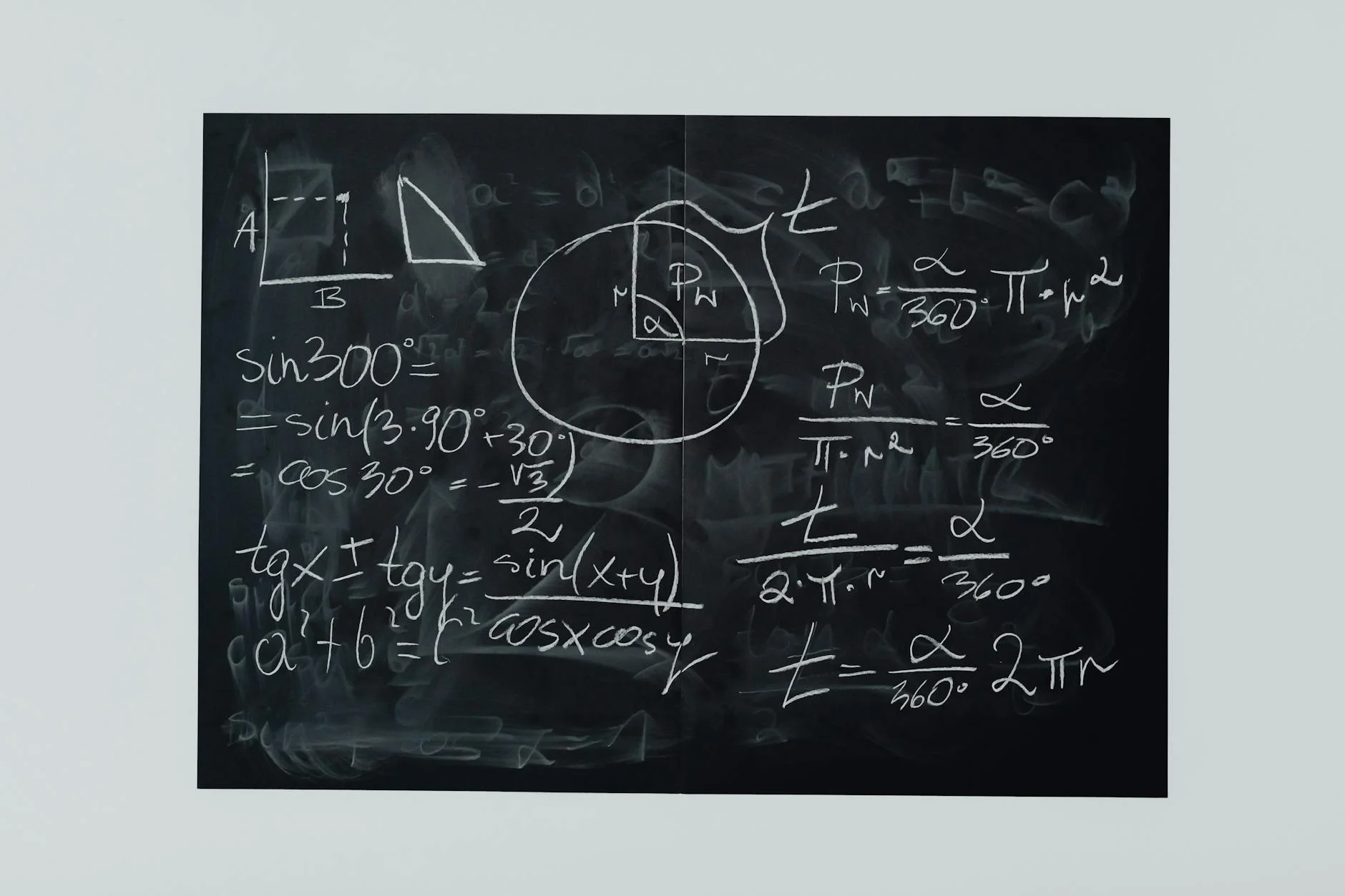

Photo by Negative Space on Pexels

Common Data Automation Patterns

Understanding the patterns that appear repeatedly across different automation scenarios makes implementation faster. Most data automation tasks are variations on a small set of structural patterns.

Extract-Transform-Load (ETL): The classic pattern. Data is extracted from one or more sources, transformed to match the target schema or format, and loaded into a destination. ETL is the backbone of reporting pipelines, data warehouse population, and most cross-system synchronization.

The extract step involves handling authentication, pagination, rate limits, and error recovery for each data source. The transform step involves the business logic specific to your domain - mapping field names, applying calculations, filtering records, combining data from multiple sources. The load step involves the destination system's API or database connection.
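
The three steps can be sketched as three small functions chained together. This is a simplified illustration under stated assumptions: `FAKE_PAGES` stands in for a paginated API, the field names are invented, and the destination is an in-memory list rather than a real database. Authentication, rate limiting, and error recovery are left out.

```python
from decimal import Decimal
from typing import Iterable, Iterator

# Hypothetical stand-in for a paginated source API.
FAKE_PAGES = [
    [{"Id": 1, "Amount": "10.50", "Status": "paid"},
     {"Id": 2, "Amount": "3.00", "Status": "void"}],
    [{"Id": 3, "Amount": "7.25", "Status": "paid"}],
]


def extract() -> Iterator[dict]:
    """Walk every page of the source and yield raw records."""
    for page in FAKE_PAGES:
        yield from page


def transform(rows: Iterable[dict]) -> Iterator[dict]:
    """Filter out non-paid orders and map fields to the target schema."""
    for row in rows:
        if row["Status"] != "paid":
            continue
        yield {
            "order_id": row["Id"],
            # Decimal avoids float rounding when converting to cents.
            "amount_cents": int(Decimal(row["Amount"]) * 100),
        }


def load(rows: Iterable[dict], destination: list) -> int:
    """Append rows to the destination and return how many were loaded."""
    count = 0
    for row in rows:
        destination.append(row)
        count += 1
    return count


warehouse: list = []
loaded = load(transform(extract()), warehouse)
print(f"loaded {loaded} rows")
```

Keeping each step as a separate function makes it possible to test the transform logic without touching the source or the destination.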

Event-driven processing: Rather than running on a schedule, event-driven automation triggers when something happens - a new record is created, a file lands in a folder, a webhook is received. This pattern is appropriate when timeliness matters more than batching efficiency.
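
A minimal sketch of the idea, assuming events arrive as dictionaries with a `type` and a `payload`: handlers register for an event type, and a dispatcher calls them when a matching event comes in. The event names and the `on` / `dispatch` helpers are hypothetical; in practice the events would come from a webhook endpoint, a message queue, or a file watcher.

```python
from collections import defaultdict
from typing import Callable

# Maps an event type to the handlers registered for it.
handlers: dict = defaultdict(list)


def on(event_type: str) -> Callable:
    """Decorator that registers a handler for one event type."""
    def register(func: Callable) -> Callable:
        handlers[event_type].append(func)
        return func
    return register


def dispatch(event: dict) -> int:
    """Run every handler registered for the event; return how many ran."""
    matched = handlers.get(event["type"], [])
    for handler in matched:
        handler(event["payload"])
    return len(matched)


synced_ids: list = []


@on("record.created")
def sync_new_record(payload: dict) -> None:
    # Placeholder for the real work, e.g. pushing the record downstream.
    synced_ids.append(payload["id"])


dispatch({"type": "record.created", "payload": {"id": 42}})
dispatch({"type": "file.deleted", "payload": {}})  # no handler, ignored
```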

Change data capture (CDC): CDC tracks changes in a database and processes only the records that have changed since the last run. This is more efficient than re-processing entire datasets on each run and reduces both compute cost and the risk of processing stale data.
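
Full CDC implementations typically read the database's change log. A simpler approximation of the same idea, shown here, is a watermark query against an `updated_at` column: each run fetches only rows modified after the last watermark and then advances it. The table, column names, and timestamps are assumptions for illustration, and this approach does not detect deleted rows.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE customers (id INTEGER PRIMARY KEY, name TEXT, updated_at TEXT)")
conn.executemany(
    "INSERT INTO customers VALUES (?, ?, ?)",
    [
        (1, "Ada", "2024-01-01T09:00:00"),
        (2, "Grace", "2024-01-02T09:00:00"),
        (3, "Linus", "2024-01-03T09:00:00"),
    ],
)


def changed_since(conn: sqlite3.Connection, watermark: str):
    """Return rows modified after the watermark, plus the new watermark."""
    rows = conn.execute(
        "SELECT id, name, updated_at FROM customers "
        "WHERE updated_at > ? ORDER BY updated_at",
        (watermark,),
    ).fetchall()
    # Advance the watermark only if something changed.
    new_watermark = rows[-1][2] if rows else watermark
    return rows, new_watermark


# First run picks up only the rows changed after the stored watermark.
rows, watermark = changed_since(conn, "2024-01-01T12:00:00")

# A second run with the advanced watermark finds nothing new.
rows_again, watermark_again = changed_since(conn, watermark)
```

ISO 8601 timestamps stored as text compare correctly as strings, which is why the plain `>` comparison works here.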

Scheduled batch processing: A simple cron job or scheduled function that runs at defined intervals, pulls data, processes it, and stores results. The simplest pattern and often the right starting point for teams without real-time requirements.

Tools and When to Use Each

The automation tools landscape ranges from no-code platforms to custom Python scripts, and the right choice depends on your team's technical capacity and the complexity of the task.

Zapier and Make (formerly Integromat) are no-code tools that connect hundreds of applications via their APIs through a visual interface. They are excellent for simple, linear workflows involving consumer-grade SaaS applications - send a Slack notification when a new row is added to a Google Sheet, create a CRM contact when a form is submitted. They become expensive and brittle as workflows grow more complex.

n8n is a source-available alternative to Zapier (distributed under a "fair-code" license rather than a standard open-source one) that can be self-hosted. It supports custom code nodes for complex transformations and is a better choice for teams that need more control than no-code tools offer but want to avoid building everything from scratch.

Apache Airflow is a widely adopted standard for complex data engineering workflows. It provides a Python API for defining directed acyclic graphs (DAGs) of tasks with dependency management, scheduling, retry logic, and monitoring built in. It is appropriate for production data pipelines with multiple steps and complex dependency relationships. The operational overhead of running Airflow is non-trivial - factor that into the build-vs-buy decision.

Prefect and Dagster are modern alternatives to Airflow that address some of its operational complexity while retaining the expressiveness of code-first pipeline definitions. They are a good middle ground for teams that have outgrown simple scripts but do not want full Airflow infrastructure.

Custom scripts (Python/Node.js with cron): For many teams, a well-structured Python script run by cron is sufficient and preferable to the operational overhead of a full orchestration platform. Scripts are easy to version control, easy to debug, and have no infrastructure dependencies beyond the runtime.


Error Handling and Reliability

Automated data pipelines that fail silently are worse than manual processes, because with a manual process someone notices when it did not happen. Reliability work is not optional - it is what separates a working automation from a time bomb.

Idempotency: Design operations to be safely re-runnable without producing duplicate results. If a pipeline that inserts records into a database can be run twice without inserting duplicates, a retry after a partial failure is safe. Without idempotency, recovery from failure requires manual intervention to fix the corrupted state.
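
One common way to get this property is an upsert keyed on a stable identifier, so that re-running the same batch updates existing rows instead of inserting new ones. The sketch below uses SQLite's `ON CONFLICT` clause; the `orders` table and its columns are hypothetical.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE orders (order_id INTEGER PRIMARY KEY, amount_cents INTEGER)")


def load_orders(conn: sqlite3.Connection, rows: list) -> None:
    """Insert rows, or update them in place if the order_id already exists."""
    with conn:  # commits on success, rolls back on error
        conn.executemany(
            # Upsert syntax requires SQLite 3.24 or newer.
            "INSERT INTO orders (order_id, amount_cents) VALUES (?, ?) "
            "ON CONFLICT(order_id) DO UPDATE SET amount_cents = excluded.amount_cents",
            rows,
        )


batch = [(1, 1050), (3, 725)]
load_orders(conn, batch)
load_orders(conn, batch)  # simulated retry after a failure

row_count = conn.execute("SELECT COUNT(*) FROM orders").fetchone()[0]
print(f"rows after two runs: {row_count}")
```

Running the load inside a transaction also means a failure partway through the batch leaves the table unchanged, which keeps the retry simple.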

Explicit logging: Every run should produce a log that answers: did this run succeed, how many records were processed, and if it failed, what specifically failed. Logs that exist only in the absence of errors are useless for diagnosing failures.
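
A minimal sketch of a run that always reports its own outcome, using Python's standard `logging` module. The `run_pipeline` wrapper, the log format, and the sample records are assumptions for illustration; the point is that the summary line is written whether or not anything failed.

```python
import logging

logging.basicConfig(level=logging.INFO, format="%(asctime)s %(levelname)s %(message)s")
logger = logging.getLogger("pipeline")


def run_pipeline(records: list, process) -> dict:
    """Process each record, log failures individually, and log a run summary."""
    processed, failed = 0, 0
    for record in records:
        try:
            process(record)
            processed += 1
        except Exception:
            failed += 1
            # logger.exception includes the traceback for diagnosis.
            logger.exception("record failed: %r", record)
    status = "success" if failed == 0 else "failed"
    logger.info("run finished status=%s processed=%d failed=%d", status, processed, failed)
    return {"status": status, "processed": processed, "failed": failed}


def parse_amount(record: dict) -> float:
    """Hypothetical processing step that fails on malformed input."""
    return float(record["amount"])


summary = run_pipeline([{"amount": "10.5"}, {"amount": "n/a"}], parse_amount)
```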

Alerting on failure: Silent failures are a reliability anti-pattern. Configure alerts via email, Slack, or PagerDuty for any pipeline failure that affects downstream consumers. The threshold for alerting should be proportional to the criticality of the pipeline.
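
The pattern can be sketched as a wrapper that runs a job and calls a notifier when it raises. The `notify` callable is a placeholder: here it appends to a list so the example is self-contained, whereas a real version would post to email, Slack, or PagerDuty through whatever client the team already uses.

```python
from typing import Callable


def run_with_alert(name: str, job: Callable[[], None], notify: Callable[[str], None]) -> bool:
    """Run the job; on any exception, send an alert and report failure."""
    try:
        job()
        return True
    except Exception as exc:
        notify(f"Pipeline '{name}' failed: {type(exc).__name__}: {exc}")
        return False


sent_alerts: list = []  # stand-in for a real alert channel


def broken_job() -> None:
    # Hypothetical failure: the source unexpectedly returned no data.
    raise ValueError("source returned 0 rows")


ok = run_with_alert("weekly_report", broken_job, sent_alerts.append)
```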

Data validation: Before loading data into a destination, validate that it matches expected schemas and ranges. A pipeline that loads a null customer ID into a field that should never be null corrupts data in a way that may not be noticed until much later. Pydantic in Python, Zod in TypeScript, and dedicated data validation libraries like Great Expectations make this validation systematic.
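
As a rough illustration of validating before load, the sketch below uses a standard-library dataclass as a stand-in for a library such as Pydantic, so that it runs without extra dependencies. The `CustomerRow` fields and the rules are hypothetical; rejected rows are collected with a reason rather than silently dropped.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class CustomerRow:
    customer_id: int
    email: str
    lifetime_value: float

    def __post_init__(self) -> None:
        # Schema and range checks run every time a row is constructed.
        if self.customer_id is None:
            raise ValueError("customer_id must not be null")
        if "@" not in self.email:
            raise ValueError("email is not valid")
        if self.lifetime_value < 0:
            raise ValueError("lifetime_value must not be negative")


def validate(raw_rows: list):
    """Split raw rows into validated rows and (row, reason) rejections."""
    valid, rejected = [], []
    for raw in raw_rows:
        try:
            valid.append(CustomerRow(**raw))
        except (TypeError, ValueError) as exc:
            rejected.append((raw, str(exc)))
    return valid, rejected


valid, rejected = validate([
    {"customer_id": 1, "email": "ada@example.com", "lifetime_value": 120.0},
    {"customer_id": None, "email": "grace@example.com", "lifetime_value": 80.0},
])
```

Only the rows in `valid` would go on to the load step; the `rejected` list is what a failure alert or a run log would report.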

When Custom Automation Is the Right Choice

Off-the-shelf automation tools are the right choice for connecting standard SaaS applications via their public APIs. Custom automation is the right choice when you need to work with proprietary systems, implement complex business logic, or build something that will scale with your data volume.

The data automation specialists at 137Foundry work on the custom automation side of this spectrum - building pipelines that handle real data complexity rather than simple SaaS-to-SaaS connections. The difference is apparent when automation needs to integrate with legacy systems, handle high data volumes, or implement logic that no visual tool's node library supports.

For teams getting started, the right approach is usually to automate the one task with the highest annual cost first, measure the actual time savings, and use that evidence to make the case for automating the next one. Trying to automate everything at once produces a large, complex system that is hard to maintain and debug.

Reference documentation worth bookmarking: Apache Airflow's documentation at airflow.apache.org/docs is the authoritative reference for complex orchestration. The Prefect documentation at docs.prefect.io is a good reference for a more modern approach. For data validation, the Great Expectations documentation at docs.greatexpectations.io covers the most comprehensive open-source framework in that space.

The 137Foundry data integration services page covers specific examples of automation work including ETL pipelines, reporting automation, and cross-system synchronization for clients across industries.

Measuring the Impact of Automation

Automation that cannot demonstrate impact is automation that will not receive continued investment. Measuring the value of data automation requires establishing a baseline before the automation goes live and comparing against it afterward.

The most direct measurement is time. If a task was taking 3 hours per week before automation, track whether the same task requires any human attention after automation, and whether the output quality has improved or declined. Time savings alone are often sufficient to justify continued investment.

Error rate reduction is the second measurement. Manual data processes introduce transcription errors, missed records, and inconsistent transformations. Automated processes, once validated, produce consistent output. Tracking error rates before and after automation gives you a quality improvement metric alongside the efficiency metric.

Timeliness improvement matters for reporting workflows. A report that previously took until Tuesday morning to arrive because someone had to compile it manually now arrives Monday at 8 AM because it runs on a schedule. That timeliness improvement may have direct business value - for sales reports reviewed at the start of the week, the report arriving before work begins on Monday rather than a day later is worth quantifying.

For longer-running automation investments, track maintenance cost separately from build cost. An automation that required 20 hours to build but requires 30 minutes of maintenance per month is a different ROI calculation than one that required 20 hours to build but requires 4 hours of maintenance per month due to fragile external dependencies.
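
The difference between those two cases can be made concrete with a break-even calculation. This sketch borrows the 3 hours per week figure from the time-measurement example above purely for illustration; the function name is made up, and the result depends entirely on the inputs chosen.

```python
from typing import Optional


def break_even_months(build_hours: float, hours_saved_per_month: float,
                      maintenance_hours_per_month: float) -> Optional[float]:
    """Months until cumulative net savings cover the build cost.

    Returns None when maintenance consumes all of the savings,
    in which case the automation never pays back.
    """
    net_per_month = hours_saved_per_month - maintenance_hours_per_month
    if net_per_month <= 0:
        return None
    return build_hours / net_per_month


# Assumed manual cost: 3 hours per week, averaged over a year.
saved_per_month = 3 * 52 / 12

low_maintenance = break_even_months(20, saved_per_month, 0.5)
high_maintenance = break_even_months(20, saved_per_month, 4)

print(f"30 min/month maintenance: {low_maintenance:.1f} months to break even")
print(f"4 h/month maintenance:    {high_maintenance:.1f} months to break even")
```

With these inputs both versions pay back within a few months, but the gap widens quickly for tasks that save less time: if the manual task only cost 4 hours per month, the high-maintenance version would never break even.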

These measurements, presented in aggregate after 6 months of automation running in production, make the case for expanding automation to additional workflows and justify the infrastructure investment that more sophisticated orchestration platforms require.