Webhooks are the backbone of modern application integrations. Payment processors, shipping platforms, CRMs, and nearly every SaaS tool uses them to push event data to your application in real time. The setup is conceptually simple: a remote system sends an HTTP POST to your endpoint when something happens, your server handles it and responds with 200, done.

Production is a different story. The remote system retries failed deliveries, sometimes dozens of times. Your endpoint might succeed at processing an event but crash before sending the acknowledgment, causing the provider to resend a completed transaction. A busy partner platform might flood your endpoint with events faster than your database can write them. Deployments, connection timeouts, and database locks create gaps in your event stream that surface only when a customer reports missing data days or weeks later.

Most guides to webhooks cover the happy path. This one covers the failure modes and the specific patterns that prevent those failures from producing duplicate records, missed transactions, or corrupted application state.

Why Webhook Failures Are More Common Than Teams Expect

The Retry Problem

Most webhook providers implement aggressive retry logic. When your endpoint returns anything outside 2xx, the provider queues a retry. Stripe, for example, retries failed live-mode deliveries for up to three days, with exponential backoff between attempts. Not every provider behaves this way (GitHub records failed deliveries but leaves redelivery to you), so check the documentation for each integration. Automatic retries are sensible provider design, but they create a specific trap: if your endpoint finishes processing an event and crashes before sending the 200 response, the provider sees a failure and retries. You then process the same event twice.

For payment events, this means fulfilling an order twice. For inventory updates, it can mean applying the same decrement multiple times. For anything with real financial or operational consequences, the retry is doing exactly what it should, but the absence of idempotency on your end turns a helpful reliability feature into a data integrity problem.
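The trap is easy to reproduce in a few lines. The following is a toy simulation, not any provider's actual retry logic: `naive_handler`, `deliver_with_retries`, and the inventory numbers are all hypothetical, and the "crash" is modeled as an exception raised after the work is done but before the acknowledgment is returned.

```python
class CrashBeforeAck(Exception):
    """Stands in for a crash after the work is done but before the response is sent."""


inventory = {"sku-1": 10}  # hypothetical stock level


def naive_handler(event, crash_before_ack):
    # Non-idempotent: every delivery applies the decrement again.
    inventory[event["sku"]] -= event["quantity"]
    if crash_before_ack:
        raise CrashBeforeAck()
    return 200


def deliver_with_retries(event, max_attempts=3):
    """Simplified provider: resend until the handler acknowledges."""
    for attempt in range(1, max_attempts + 1):
        try:
            # Only the first attempt crashes in this simulation.
            naive_handler(event, crash_before_ack=(attempt == 1))
            return attempt
        except CrashBeforeAck:
            continue
    return max_attempts


attempts = deliver_with_retries({"id": "evt_1", "sku": "sku-1", "quantity": 2})
print(attempts, inventory["sku-1"])  # prints: 2 6
```

One event asking for a decrement of 2 leaves the stock at 6 instead of 8, because the first delivery did its work before the simulated crash and the retry did it again.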

The Timeout Problem

Webhook providers set short delivery timeout windows, often between 5 and 30 seconds. If your endpoint performs synchronous work during that window - database queries, calls to third-party APIs, report generation, sending notifications - you risk timing out even when your application is functioning correctly. The provider marks the delivery failed and retries, even though your handler may still be running and may go on to finish the work.

This is the source of a particularly confusing failure pattern: events that appear failed in the provider dashboard but have actually been processed, sometimes more than once.

The Volume Problem

Some systems deliver events in bursts. A CRM migration, a scheduled batch export, or a marketing platform sending campaign events can flood your endpoint with hundreds of requests in a short window. If each request waits for a synchronous database write, one slow write creates a queue of requests timing out upstream. The provider interprets the timeouts as failures and schedules retries, which arrive when your endpoint is already overwhelmed.

All three failure patterns share a common root: the endpoint is doing too much work before returning a response.

How to Build Endpoints That Survive These Failures

Respond Fast, Process Asynchronously

The most important structural change for reliable webhook processing is separating acknowledgment from processing. Your endpoint should validate the incoming request, store the raw event payload, and return an HTTP 202 Accepted immediately. A background worker reads from that storage and handles the business logic independently.

This decouples your response time from your processing time. Even if processing takes minutes, hits a transient database error, or requires retries of its own, the provider already received its acknowledgment and will not resend the event.

```javascript
app.post('/webhooks/orders', async (req, res) => {
  // Verify against the raw request bytes, not the re-serialized JSON.
  // req.rawBody is assumed to be populated by body-parsing middleware.
  const isValid = verifySignature(
    req.headers['x-webhook-signature'],
    req.rawBody,
    process.env.WEBHOOK_SECRET
  );
  if (!isValid) return res.status(401).end();

  // Persist the event; business logic runs later in a worker
  await db.query(
    'INSERT INTO webhook_events (event_id, type, payload, received_at) VALUES ($1, $2, $3, NOW())',
    [req.body.id, req.body.type, JSON.stringify(req.body)]
  );

  res.status(202).end(); // Respond before any processing
});
```

A separate worker reads from webhook_events, processes each row, and marks it complete on success. The endpoint itself stays fast and stateless.
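The worker side can be sketched as follows. This is a minimal illustration under stated assumptions, not a production design: it uses Python's built-in sqlite3 module so it runs on its own, it adds a hypothetical `processed_at` column to `webhook_events` to mark completion, and `process_pending` and its `handler` callback are names invented for the example.

```python
import json
import sqlite3
from datetime import datetime, timezone


def process_pending(conn, handler, batch_size=100):
    """Process stored events that have not been marked complete yet."""
    rows = conn.execute(
        "SELECT event_id, type, payload FROM webhook_events "
        "WHERE processed_at IS NULL ORDER BY received_at LIMIT ?",
        (batch_size,),
    ).fetchall()
    done = 0
    for event_id, event_type, payload in rows:
        try:
            handler(event_type, json.loads(payload))
        except Exception:
            # Leave the row unmarked so a later pass picks it up again.
            continue
        conn.execute(
            "UPDATE webhook_events SET processed_at = ? WHERE event_id = ?",
            (datetime.now(timezone.utc).isoformat(), event_id),
        )
        conn.commit()
        done += 1
    return done


# Demo with an in-memory database and one stored event.
conn = sqlite3.connect(":memory:")
conn.execute(
    "CREATE TABLE webhook_events ("
    "event_id TEXT PRIMARY KEY, type TEXT, payload TEXT, "
    "received_at TEXT, processed_at TEXT)"
)
conn.execute(
    "INSERT INTO webhook_events VALUES (?, ?, ?, ?, NULL)",
    ("evt_1", "order.created", json.dumps({"order_id": 42}), "2024-01-01T00:00:00+00:00"),
)
conn.commit()

handled = []
count = process_pending(conn, lambda t, p: handled.append((t, p)))
```

In a real deployment the worker would run this in a loop or on a schedule, and a failing event would need a retry limit so it cannot be picked up forever.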

Implement Idempotency at the Processing Layer

Idempotency means applying an operation multiple times produces the same result as applying it once. For webhook processing, this means handling the same event twice should have the same effect as handling it once. The mechanism is simple: store processed event IDs and skip events you have already handled.

Most providers include a unique event ID in every payload. Store these IDs as events are processed and check for them before running business logic.

```python
def handle_event(event_id, event_type, payload):
    # Check for prior processing before doing anything
    cursor.execute(
        "SELECT 1 FROM processed_events WHERE event_id = %s",
        (event_id,)
    )
    if cursor.fetchone():
        return  # Already processed - skip silently

    # Run business logic
    apply_business_logic(event_type, payload)

    # Record completion after success, not before
    cursor.execute(
        "INSERT INTO processed_events (event_id, processed_at) VALUES (%s, NOW())",
        (event_id,)
    )
    connection.commit()
```

The ordering matters: record the event as processed after the business logic succeeds. Recording it first means a crash during processing leaves the event marked complete, and it will never run again.
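A check followed by a later insert still leaves a small window in which two workers can both pass the check for the same event. One way to close it, sketched here as an assumption rather than a prescribed method, is to let a primary key on the event ID act as the gate and to run the claim and the business writes in a single transaction, so a failure rolls both back. The example uses sqlite3 and `INSERT OR IGNORE` so it runs standalone (PostgreSQL offers `INSERT ... ON CONFLICT DO NOTHING` for the same purpose); `handle_once` and `fulfill` are hypothetical names, and the approach only holds when the business logic writes to the same database.

```python
import sqlite3


def handle_once(conn, event_id, apply_business_logic):
    """Return True if the event was processed, False if it was a duplicate."""
    try:
        cur = conn.execute(
            "INSERT OR IGNORE INTO processed_events (event_id) VALUES (?)",
            (event_id,),
        )
        if cur.rowcount == 0:
            conn.rollback()
            return False  # Primary key already present: duplicate delivery
        apply_business_logic(conn)
        conn.commit()  # Claim and business writes become visible together
        return True
    except Exception:
        conn.rollback()  # Undo the claim so a retry can run the event again
        raise


conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE processed_events (event_id TEXT PRIMARY KEY)")
conn.execute("CREATE TABLE orders (order_id INTEGER, fulfilled INTEGER)")
conn.commit()


def fulfill(c):
    # Hypothetical business logic: mark one order as fulfilled.
    c.execute("INSERT INTO orders VALUES (42, 1)")


first = handle_once(conn, "evt_1", fulfill)
second = handle_once(conn, "evt_1", fulfill)
order_count = conn.execute("SELECT COUNT(*) FROM orders").fetchone()[0]
```

Here the claim is written first, but because it shares a transaction with the business logic, a crash or exception removes it again, which preserves the guarantee that a failed event can still be retried.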

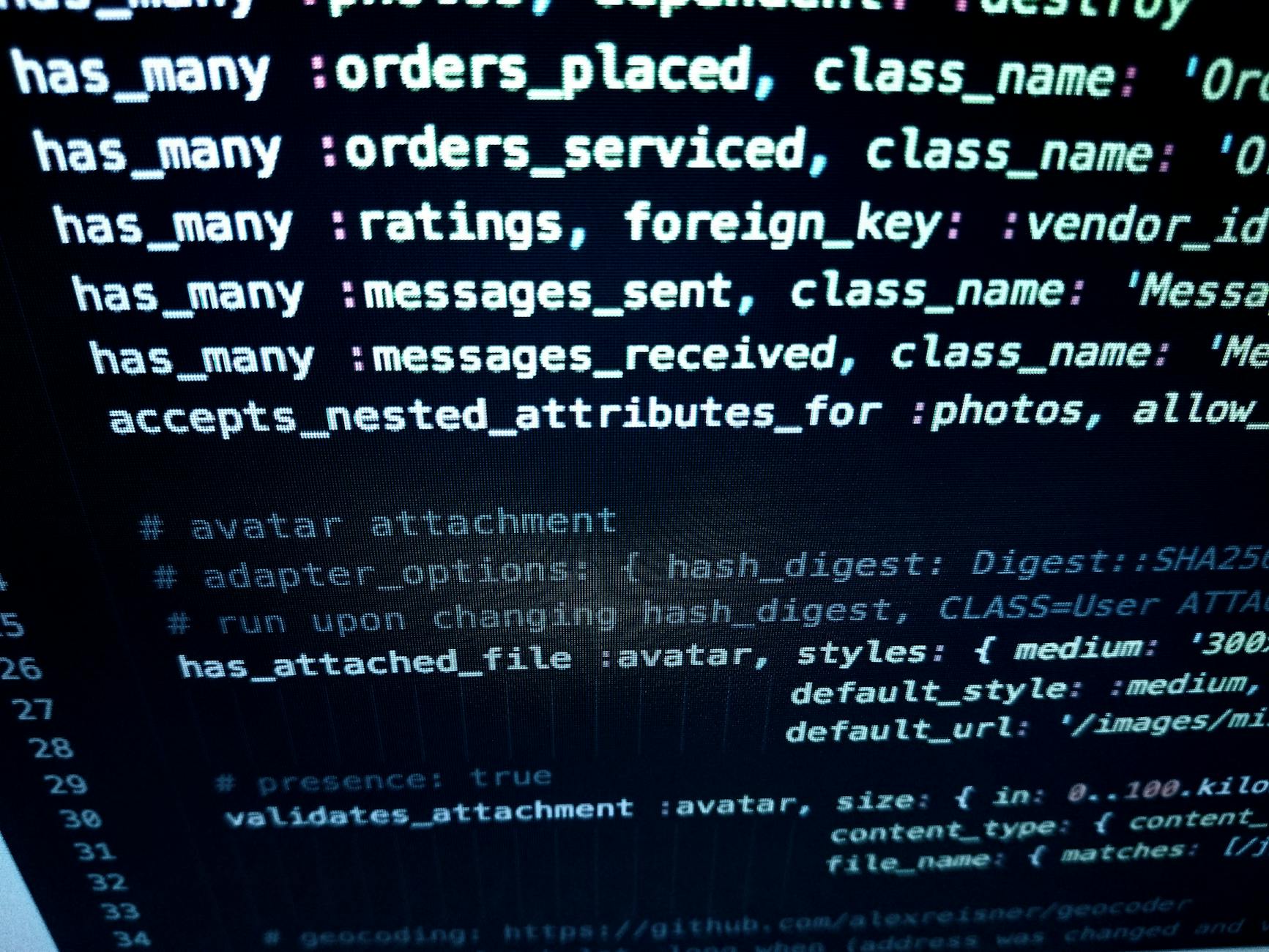

Photo by luis gomes on Pexels

Validate Signatures Before Doing Anything Else

Every reputable webhook provider signs payloads with a secret key shared during setup. Validate this signature before touching the payload. It confirms the request came from the legitimate sender and was not altered in transit, which keeps your processing logic from running on forged or injected content. Stripe, GitHub, and many other platforms use HMAC-SHA256 signatures. A valid signature alone does not stop someone from replaying a captured request, so when the scheme also signs a timestamp, as Stripe's does, reject deliveries whose timestamp is too old. Validation adds negligible latency and eliminates an entire class of security and data integrity risk.
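A minimal sketch of the check in Python, assuming the provider sends a plain hex-encoded HMAC-SHA256 of the raw request body in a header. Real providers wrap this in their own formats (GitHub prefixes the digest with `sha256=`, and Stripe's header carries a timestamp alongside the signature), so treat `verify_signature` as an illustration and follow your provider's documentation for the exact scheme.

```python
import hashlib
import hmac


def verify_signature(signature_header, raw_body, secret):
    """Return True when the header matches the HMAC-SHA256 of the raw body.

    signature_header: hex digest string from the request (assumed format)
    raw_body: the request body as bytes, exactly as received
    secret: the shared signing secret as a string
    """
    if not signature_header:
        return False
    expected = hmac.new(secret.encode(), raw_body, hashlib.sha256).hexdigest()
    # compare_digest runs in constant time, so the comparison does not
    # reveal how many leading characters matched.
    return hmac.compare_digest(expected.encode(), signature_header.encode())
```

Compute the digest over the raw bytes rather than a re-serialized JSON object, since re-serialization can change whitespace or key order and invalidate an otherwise genuine signature.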

Advanced Reliability Patterns

Dead Letter Queues for Persistent Failures

Some events fail to process repeatedly for non-transient reasons: the referenced record does not exist in your database, the payload is missing required fields, or the event type is one your current code version does not handle. After a defined number of retry attempts, move these events to a dead letter queue rather than dropping them silently.

A dead letter queue is simply a separate storage location for events that exceeded their retry budget. It gives you a place to inspect failed events, diagnose root causes, and reprocess them once the underlying issue is resolved. Without it, persistent failures disappear and you may not know what data was missed.
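The retry budget can be sketched in a few lines. Everything here is illustrative: `MAX_ATTEMPTS`, `process_with_budget`, and the in-memory `attempts` dict and `dead_letters` list stand in for whatever counters and storage your system actually uses (typically a database table or a queue).

```python
MAX_ATTEMPTS = 5  # hypothetical retry budget


def process_with_budget(event, handler, attempts, dead_letters):
    """Run handler once; park the event after MAX_ATTEMPTS failures.

    attempts maps event ID -> failure count so far.
    dead_letters is a list standing in for dead letter storage.
    Returns "done", "retry", or "dead".
    """
    try:
        handler(event)
        attempts.pop(event["id"], None)
        return "done"
    except Exception as exc:
        count = attempts.get(event["id"], 0) + 1
        attempts[event["id"]] = count
        if count >= MAX_ATTEMPTS:
            # Keep the payload and the last error for later diagnosis.
            dead_letters.append(
                {"event": event, "error": repr(exc), "attempts": count}
            )
            return "dead"
        return "retry"
```

Storing the last error next to the payload is what makes the queue useful for diagnosis: an operator can see why the event failed without reproducing it.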

Monitor Processing Lag, Not Just Error Rates

Error rates tell you when processing is failing. Lag tells you when processing is falling behind, which often precedes failures. Track the delta between when events arrive at your endpoint and when they are processed by your workers. Growing lag means your workers cannot keep pace with incoming volume, and you need to scale processing capacity before timeouts start cascading.

A lag metric requires only a timestamp comparison and a dashboard entry. It provides early warning that error rate monitoring simply cannot give you.
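As a rough sketch, the metric is a subtraction over two timestamps. The function names are hypothetical, and the example assumes each event carries a timezone-aware `received_at` and an optional `processed_at`; unprocessed events are measured against the current time so a stalled worker shows up as growing lag.

```python
from datetime import datetime, timezone


def processing_lag_seconds(received_at, processed_at=None, now=None):
    """Seconds between arrival and processing.

    Events that are still unprocessed are measured against `now`.
    """
    end = processed_at or now or datetime.now(timezone.utc)
    return (end - received_at).total_seconds()


def max_lag_seconds(events, now=None):
    """Worst-case lag across (received_at, processed_at) pairs; 0.0 when empty."""
    return max(
        (processing_lag_seconds(r, p, now) for r, p in events),
        default=0.0,
    )
```

Emitting the maximum (or a high percentile) rather than the average keeps a single stuck event from being hidden by many fast ones.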

For teams building integrations where data completeness is critical, data integration specialists at 137Foundry design event-driven pipelines with reliability characteristics built in from the start. The backend team handles queue infrastructure, worker scaling, and observability configuration for systems where silent failures are not acceptable.

Handle Schema Evolution Without Throwing Hard Errors

Webhook providers update their event schemas over time. New fields appear, existing fields get renamed, nested objects get restructured. Write your processing code to tolerate fields it does not recognize and to log warnings when expected fields are missing, rather than throwing exceptions on schema mismatches.

Strict validation that throws on unexpected fields is a common cause of webhook pipelines breaking after a provider releases a new API version, often without anyone noticing until data goes missing. Tolerant parsing lets you absorb schema additions without a deployment.

A practical approach is to extract only the fields your code depends on and pass the rest through without inspection. Set up automated alerts when fields your logic relies on are missing from incoming events, so a breaking schema change surfaces as a monitored alert rather than a silent data gap. This combination of tolerant parsing and targeted field presence monitoring gives you flexibility without losing visibility into changes that affect your processing.
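The extraction step might look like the following sketch. The field names in `REQUIRED_FIELDS` and the `extract_fields` helper are hypothetical, and the warning goes to a standard `logging` logger on the assumption that your alerting can be attached to it.

```python
import logging

logger = logging.getLogger("webhooks")

REQUIRED_FIELDS = ("id", "type", "amount")  # hypothetical fields the logic depends on


def extract_fields(payload, required=REQUIRED_FIELDS):
    """Return (fields, missing).

    Keys the code does not know about are ignored rather than rejected.
    Missing required keys are logged so an alert can be attached to them.
    """
    fields = {name: payload[name] for name in required if name in payload}
    missing = [name for name in required if name not in payload]
    if missing:
        logger.warning(
            "event %s missing expected fields: %s", payload.get("id"), missing
        )
    return fields, missing
```

A caller can then decide per event type whether a missing field is fatal (route the event to the dead letter queue) or tolerable (process with a default).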

Related Resources

Building reliable webhook integrations requires the same foundational patterns regardless of which providers you use. Async processing, idempotency keys, and dead letter queues apply whether you are integrating Stripe, a shipping carrier API, a CRM, or a custom internal service.

137Foundry builds and maintains these integrations as part of their data integration services, including event-driven pipelines that incorporate AI-based transformation steps through their AI integration service.

For the technical specification on HTTP response status semantics, RFC 9110, which obsoletes RFC 7231, is the authoritative reference. Stripe's webhook documentation is one of the best practical resources for understanding retry behavior, event ID structure, and signature validation, and its patterns apply broadly even outside of Stripe integrations.

Getting the Architecture Right the First Time

Reliable webhook integrations share three properties: they acknowledge events quickly without waiting for processing to complete, they use event IDs to skip duplicates, and they route persistent failures to a dead letter queue rather than dropping them. None of these patterns are individually complex, but all three require intentional decisions at the architecture stage.

The cost of retrofitting reliability into a webhook integration that has been running in production for two years - with real event history and live data flowing through it - is significantly higher than getting the design right before the first deployment. If your integrations process financial transactions, inventory state, or any data where duplicates or gaps carry real consequences, building for reliability from the start is the pragmatic choice.