API integrations tend to work perfectly in development and break at the worst possible time in production. The timing is not random. Development environments have stable connections, predictable data, and no concurrent load. Production environments have intermittent network issues, malformed data from external systems, rate limits that only matter at scale, and edge cases that only appear when real users generate real traffic.

Most integration failures are predictable. They are caused by a small set of well-understood problems that have well-understood solutions. The reason they keep happening is that teams under deadline pressure skip the reliability work because the happy path works - and then spend significantly more time debugging failures later than the reliability work would have cost upfront.

Authentication and Token Management

Authentication is the first place integrations fail, and it is frequently where the failure is hardest to diagnose because auth errors often surface as permission errors or unexpected API responses rather than explicit authentication failures.

API key management: API keys should be stored in environment variables, never hardcoded in source code or committed to version control. This is standard advice that teams still violate regularly. Tooling like git-secrets or built-in secret scanning in GitHub and GitLab can catch accidental key commits before they hit the remote.
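Here is a minimal sketch of reading a key from the environment and failing fast when it is missing. The variable name `PAYMENTS_API_KEY` and the helper `load_api_key` are hypothetical.

```python
import os


def load_api_key(var_name: str = "PAYMENTS_API_KEY") -> str:
    """Read an API key from the environment (the default variable name is hypothetical)."""
    key = os.environ.get(var_name, "").strip()
    if not key:
        # Fail loudly at startup instead of sending an empty key to the provider.
        raise RuntimeError(f"Missing required environment variable {var_name}")
    return key
```

Raising at load time means a missing key shows up as a clear configuration error. It does not surface later as a confusing 401 from the provider.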

The less obvious key management problem is key rotation. When an API key expires or is rotated by the provider, integrations fail immediately and without warning if there is no rotation procedure. Document where each key is used, set calendar reminders for key expirations, and prefer keys with no expiration when the provider allows it.

OAuth token refresh: OAuth integrations add complexity through token expiry. Access tokens typically expire in 1-24 hours. Integrations that do not handle token refresh gracefully will fail at a time determined by when the token was issued, not when the code was written - which means failures often appear days or weeks after deployment.

The correct implementation: before every API call, check whether the access token expires within the next few minutes. If it does, refresh it before proceeding. Handle refresh failures explicitly - if token refresh fails, the integration should surface a clear error rather than attempting the API call with an expired token and receiving a confusing 401 response.
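The check-then-refresh step can be sketched as a small token manager. It assumes a hypothetical `refresh_fn` that performs your provider's refresh-token exchange and returns the new token with its expiry time. The 5-minute skew window is an example value.

```python
import time
from dataclasses import dataclass
from typing import Callable, Optional


class TokenRefreshError(Exception):
    """Raised when the access token cannot be refreshed."""


@dataclass
class Token:
    access_token: str
    expires_at: float  # Unix timestamp, in seconds


class TokenManager:
    def __init__(self, refresh_fn: Callable[[], Token], skew_seconds: float = 300.0):
        # refresh_fn is a hypothetical callable that runs the provider's
        # refresh-token exchange and returns a new Token.
        self._refresh_fn = refresh_fn
        self._skew = skew_seconds
        self._token: Optional[Token] = None

    def get_access_token(self) -> str:
        """Return a token valid for at least skew_seconds, refreshing it first if needed."""
        needs_refresh = (
            self._token is None
            or self._token.expires_at - time.time() <= self._skew
        )
        if needs_refresh:
            try:
                self._token = self._refresh_fn()
            except Exception as exc:
                # Surface a clear error instead of calling the API with a stale token.
                raise TokenRefreshError("OAuth token refresh failed") from exc
        return self._token.access_token
```

Call `get_access_token()` immediately before building each request's `Authorization` header. In multithreaded code you would also want a lock around the refresh, so concurrent callers do not all refresh at once.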

Credential validation on startup: Integrations that validate their credentials during application initialization catch auth problems immediately on deployment rather than during the first user action that triggers the integration. A startup check that makes a lightweight API call to verify credentials is worth the few hundred milliseconds it adds to initialization.
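A startup check can be as simple as the sketch below. It assumes a hypothetical `probe` callable that makes one cheap authenticated request (for example, fetching the current account) and returns the HTTP status code.

```python
from typing import Callable


class CredentialCheckError(RuntimeError):
    """Raised at startup when an integration's credentials do not work."""


def verify_credentials_on_startup(probe: Callable[[], int], service: str) -> None:
    # probe is a hypothetical callable: one lightweight authenticated request
    # that returns the HTTP status code.
    status = probe()
    if status in (401, 403):
        raise CredentialCheckError(f"{service}: credentials rejected (HTTP {status})")
    if status >= 400:
        raise CredentialCheckError(f"{service}: startup probe failed (HTTP {status})")
```

Whether a provider-side 5xx during the probe should block startup is a judgment call. This sketch treats every error response as fatal.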

Photo by Digital Buggu on Pexels

Rate Limiting and Retry Logic

External APIs have rate limits. At low request volumes, you will never encounter them. At higher volumes, you will encounter them constantly - and the behavior of your integration when it hits a rate limit determines whether users experience a brief delay or an error.

Understand the rate limit structure before you build: Different APIs implement rate limits differently. Some limit by requests per minute, some by requests per day, some by concurrent connections. Some return 429 responses with Retry-After headers that tell you how long to wait. Others return 429s with no timing information. Know what you are working with before you build the retry logic.
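When a provider does send `Retry-After`, HTTP allows it to be either a number of seconds or an HTTP date. A minimal parser might look like this. It returns `None` when there is no usable hint, so the caller can fall back to its own backoff schedule.

```python
import email.utils
import time
from typing import Optional


def retry_after_seconds(header_value: Optional[str], now: Optional[float] = None) -> Optional[float]:
    """Convert a Retry-After header (delta-seconds or HTTP-date) into seconds to wait."""
    if not header_value:
        return None
    value = header_value.strip()
    if value.isdigit():
        return float(value)  # e.g. "Retry-After: 120"
    try:
        # e.g. "Retry-After: Wed, 21 Oct 2015 07:28:00 GMT"
        retry_at = email.utils.parsedate_to_datetime(value)
    except (TypeError, ValueError):
        return None  # unparseable: let the caller use exponential backoff instead
    now = time.time() if now is None else now
    return max(0.0, retry_at.timestamp() - now)
```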

Exponential backoff with jitter: The standard retry pattern for rate-limited and transient failures is exponential backoff: wait 1 second before the first retry, 2 seconds before the second, 4 before the third, up to a maximum wait time. Add random jitter to the wait times to prevent thundering herd problems where multiple clients retry simultaneously after the same failure.

```python
import random
import time


class RateLimitError(Exception):
    """Placeholder: raise this from fn when the API responds with HTTP 429."""


def retry_with_backoff(fn, max_attempts=5, base_delay=1.0, max_delay=30.0):
    """Call fn, retrying on RateLimitError with capped exponential backoff plus jitter."""
    for attempt in range(max_attempts):
        try:
            return fn()
        except RateLimitError:
            if attempt == max_attempts - 1:
                raise  # out of attempts: surface the original error
            # 1s, 2s, 4s, ... capped at max_delay, plus up to 1s of random jitter
            delay = min(base_delay * (2 ** attempt), max_delay) + random.uniform(0, 1)
            time.sleep(delay)
```

Distinguish retryable from non-retryable errors: 429 (rate limited) and 503 (service unavailable) are retryable - the same request may succeed if retried after a delay. 400 (bad request) and 422 (validation error) are not retryable - retrying the same malformed request will produce the same error. 401 (unauthorized) may be retryable if the token needs to be refreshed first, but not if the credentials are incorrect. Your retry logic should handle each category differently.
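Those categories can be expressed as an explicit decision function. This is a sketch covering only the status codes named above. Every other code defaults to "fail" here, and you would extend the retryable set (502 or 504, for instance) only if your provider documents them as transient.

```python
from enum import Enum


class RetryDecision(Enum):
    RETRY = "retry"                                    # transient: may succeed later
    REFRESH_AUTH_THEN_RETRY = "refresh_auth_then_retry"
    FAIL = "fail"                                      # permanent: retrying repeats the error


RETRYABLE = {429, 503}
NOT_RETRYABLE = {400, 422}


def classify_status(status: int, token_refresh_attempted: bool = False) -> RetryDecision:
    if status in RETRYABLE:
        return RetryDecision.RETRY
    if status == 401:
        # Allow one refresh; a second 401 suggests the credentials themselves are wrong.
        if token_refresh_attempted:
            return RetryDecision.FAIL
        return RetryDecision.REFRESH_AUTH_THEN_RETRY
    if status in NOT_RETRYABLE:
        return RetryDecision.FAIL
    # Codes not discussed above: fail by default rather than guess.
    return RetryDecision.FAIL
```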

Webhook Reliability

Webhooks - HTTP callbacks where a third-party system sends events to your endpoint - are a common integration pattern that introduces a different set of reliability challenges than polling-based integrations.

Respond immediately, process asynchronously: Webhook providers typically expect a 200 response within a few seconds. If your processing takes longer than that - or if processing fails - the provider may retry the webhook or mark it as failed. The correct pattern is to acknowledge the webhook immediately by storing the event payload in a queue, return 200, and process the event asynchronously. This decouples acknowledgment from processing and prevents the provider from timing out.
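The acknowledge-then-process split is sketched below with Python's in-memory `queue.Queue` and a background thread. Both stand in for a durable queue and a separate worker process. An in-memory queue loses events if the process restarts, so it is only suitable for illustration. `handle_webhook` is a hypothetical function your web framework would call for each POST.

```python
import logging
import queue
import threading
from typing import Callable

log = logging.getLogger("webhooks")

# In-memory stand-in for a durable queue; events here are lost on restart.
event_queue: "queue.Queue[bytes]" = queue.Queue()


def handle_webhook(raw_body: bytes) -> int:
    """Hypothetical request handler: enqueue the payload and acknowledge at once."""
    event_queue.put(raw_body)
    return 200


def start_worker(process: Callable[[bytes], None]) -> threading.Thread:
    """Start a background thread that processes queued events one at a time."""
    def run() -> None:
        while True:
            raw_body = event_queue.get()
            try:
                process(raw_body)
            except Exception:
                # Logged here; a real system would retry or dead-letter the event.
                log.exception("webhook processing failed")
            finally:
                event_queue.task_done()

    worker = threading.Thread(target=run, daemon=True)
    worker.start()
    return worker
```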

Idempotent event processing: Most webhook providers guarantee at-least-once delivery. This means your endpoint may receive the same event multiple times - after network failures, after your endpoint returns a non-200 status, or simply because the provider's delivery system retries on their schedule. Event processing must be idempotent: processing the same event twice should produce the same result as processing it once. A common implementation is to store processed event IDs and skip events that have already been processed.
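A minimal sketch of the "store processed event IDs" approach follows. It assumes each event carries a unique `id` field, which is common but provider-specific. The in-memory set stands in for durable storage, such as a database table with a unique constraint on the event ID.

```python
import threading
from typing import Callable, Set


class IdempotentProcessor:
    """Runs handler once per event ID and skips redeliveries of the same event."""

    def __init__(self, handler: Callable[[dict], None]):
        self._handler = handler
        self._processed: Set[str] = set()  # stand-in for a durable store
        self._lock = threading.Lock()

    def process(self, event: dict) -> bool:
        """Return True if the event was handled, False if it was a duplicate."""
        event_id = event["id"]  # assumes the provider sends a unique event ID
        # Holding the lock while the handler runs stops two concurrent deliveries
        # of the same event from both running, at the cost of serializing work.
        with self._lock:
            if event_id in self._processed:
                return False
            self._handler(event)
            # Record the ID only after success, so a failed attempt can be retried.
            self._processed.add(event_id)
        return True
```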

Signature verification: Webhook payloads arrive as HTTP POST requests from an external server. Without signature verification, any server can send requests to your webhook endpoint and your application will process them. Most providers include a signature in the request headers using HMAC-SHA256 or a similar algorithm. Verify this signature before processing any webhook payload.
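The sketch below uses Python's standard `hmac` module. It assumes the simplest scheme: the provider signs the raw request body with a shared secret and sends the hex digest in a header. Real schemes vary (prefixes like `sha256=`, timestamps included in the signed string, base64 encoding), so follow your provider's documentation for the exact format.

```python
import hashlib
import hmac


def verify_signature(raw_body: bytes, signature_header: str, secret: bytes) -> bool:
    """Check a hex-encoded HMAC-SHA256 signature computed over the raw body bytes."""
    # Sign the exact bytes received; re-serialized JSON may not match byte-for-byte.
    expected = hmac.new(secret, raw_body, hashlib.sha256).hexdigest()
    # compare_digest runs in constant time, which avoids leaking timing information.
    return hmac.compare_digest(expected.encode(), signature_header.strip().encode())
```

Run this check before enqueueing or parsing the payload, and reject the request (typically with a 401 or 403) when it fails.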

Retry handling and dead letter queues: When async webhook processing fails after retries, the event should land in a dead letter queue rather than being silently dropped. Dead letter queues capture events that could not be processed, allow for inspection and manual replay, and prevent data loss from transient processing failures.
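A minimal sketch of retry-then-dead-letter follows. The `dead_letters` list stands in for a durable dead letter queue, and the attempt count and delays are example values.

```python
import logging
import time
from typing import Callable, List

log = logging.getLogger("webhooks")


def process_with_dlq(event: dict, handler: Callable[[dict], None], dead_letters: List[dict],
                     max_attempts: int = 3, base_delay: float = 0.5) -> bool:
    """Try handler up to max_attempts times; park the event in dead_letters on final failure."""
    for attempt in range(1, max_attempts + 1):
        try:
            handler(event)
            return True
        except Exception as exc:
            log.warning("attempt %d/%d failed for event %s: %s",
                        attempt, max_attempts, event.get("id"), exc)
            if attempt < max_attempts:
                time.sleep(base_delay * 2 ** (attempt - 1))
    # Keep the event for inspection and manual replay instead of dropping it.
    dead_letters.append(event)
    return False
```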

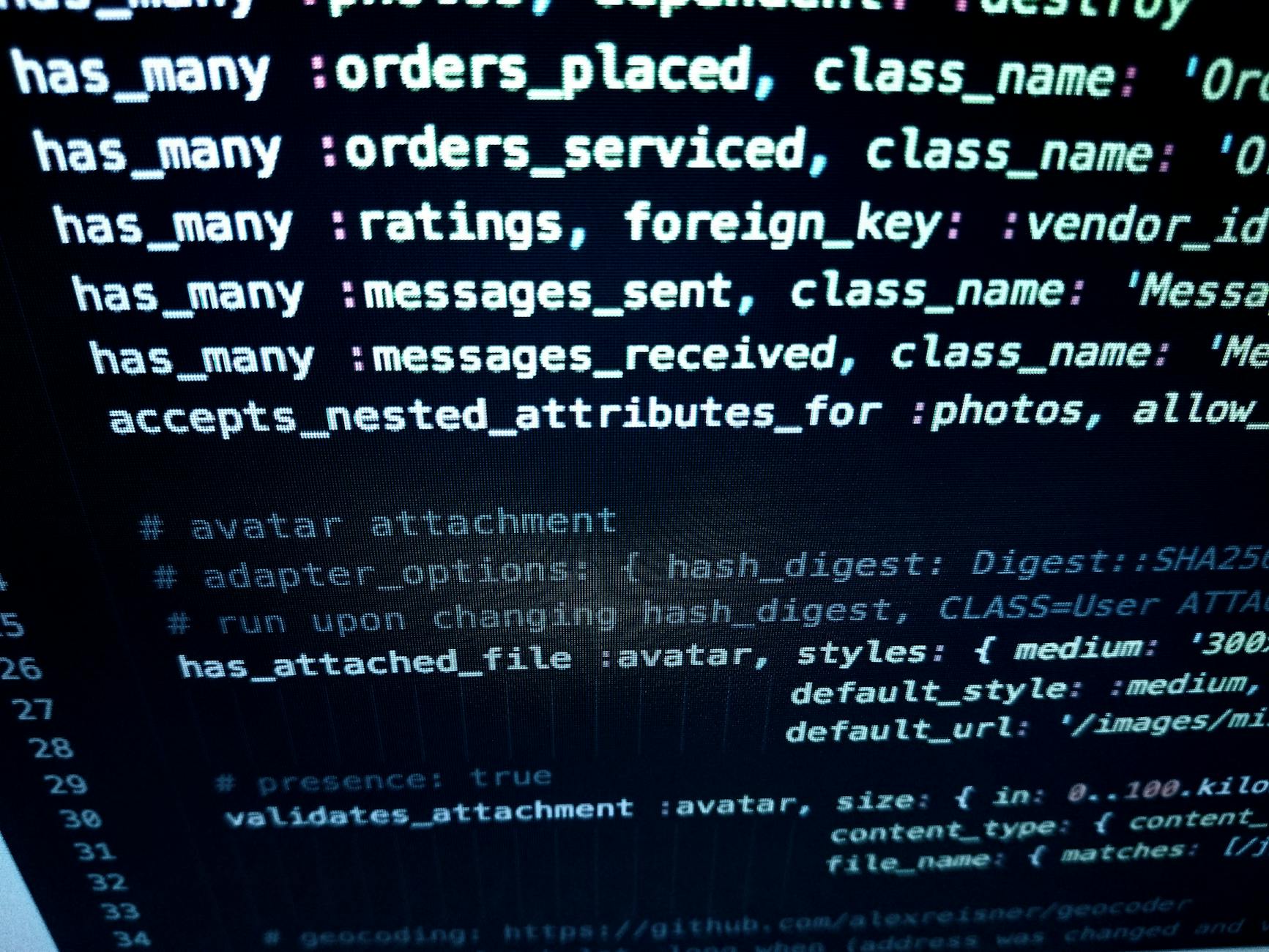

Photo by panumas nikhomkhai on Pexels

Monitoring and Alerting

An integration that is silently failing is harder to manage than one that fails noisily. The goal is to know about failures before users report them, which requires proactive monitoring rather than reactive debugging.

Log every integration call: Each API call should produce a log entry that includes the endpoint called, the response status code, the response time, and whether the call succeeded or failed. This creates an audit trail for debugging and a data source for monitoring.
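One way to get a consistent log line per call is a thin wrapper like the one below. It assumes a hypothetical `call` that performs the request and returns `(status_code, body)`. Logging in `finally` means calls that raise (timeouts, connection errors) are recorded too.

```python
import logging
import time
from typing import Callable, Optional, Tuple, TypeVar

log = logging.getLogger("integrations")
T = TypeVar("T")


def logged_call(endpoint: str, call: Callable[[], Tuple[int, T]]) -> Tuple[int, T]:
    """Run call() and emit one log line with endpoint, status, duration, and outcome."""
    start = time.monotonic()
    status: Optional[int] = None
    try:
        status, body = call()
        return status, body
    finally:
        elapsed_ms = (time.monotonic() - start) * 1000
        succeeded = status is not None and 200 <= status < 400
        log.info("endpoint=%s status=%s duration_ms=%.1f success=%s",
                 endpoint, status, elapsed_ms, succeeded)
```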

Track error rates, not just error counts: A single API error is noise. An error rate that climbs above a threshold is a signal. Set up monitoring that tracks the percentage of failed API calls over a rolling window and alerts when that percentage exceeds a threshold you have defined as unacceptable. Tools like Datadog, New Relic, and the open-source Prometheus with Grafana all support this pattern.
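The rolling-window idea can be sketched in plain Python before you wire it into a monitoring tool. The window length, threshold, and minimum call count below are example values, not recommendations.

```python
import time
from collections import deque
from typing import Deque, Optional, Tuple


class RollingErrorRate:
    """Fraction of failed calls over the last window_seconds."""

    def __init__(self, window_seconds: float = 300.0, threshold: float = 0.05, min_calls: int = 20):
        self.window = window_seconds
        self.threshold = threshold  # alert when the failure rate is above this
        self.min_calls = min_calls  # avoid alerting on 1 failure out of 2 calls
        self._events: Deque[Tuple[float, bool]] = deque()

    def _now(self, now: Optional[float]) -> float:
        return time.monotonic() if now is None else now

    def _evict(self, now: float) -> None:
        # Drop calls that have aged out of the window.
        while self._events and self._events[0][0] < now - self.window:
            self._events.popleft()

    def record(self, succeeded: bool, now: Optional[float] = None) -> None:
        now = self._now(now)
        self._events.append((now, succeeded))
        self._evict(now)

    def error_rate(self, now: Optional[float] = None) -> float:
        self._evict(self._now(now))
        if not self._events:
            return 0.0
        failures = sum(1 for _, ok in self._events if not ok)
        return failures / len(self._events)

    def should_alert(self, now: Optional[float] = None) -> bool:
        now = self._now(now)
        rate = self.error_rate(now)
        return len(self._events) >= self.min_calls and rate > self.threshold
```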

Alert on degraded performance, not just failures: An API that normally responds in 200ms and is now taking 3 seconds has not failed, but it is causing user-facing slowdowns. Response time percentile monitoring (alert when p95 latency exceeds a threshold) catches degraded-but-not-failed integrations before they become complete failures.
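Here is a sketch of the p95 check, using the nearest-rank definition of a percentile. Monitoring tools may interpolate differently, and the threshold is whatever you have decided is acceptable.

```python
import math
from typing import Sequence


def percentile(samples_ms: Sequence[float], pct: float) -> float:
    """Nearest-rank percentile: smallest sample with at least pct% of samples at or below it."""
    if not samples_ms:
        raise ValueError("no samples")
    ordered = sorted(samples_ms)
    rank = math.ceil(pct * len(ordered) / 100)  # 1-based rank
    return ordered[max(rank, 1) - 1]


def latency_degraded(samples_ms: Sequence[float], p95_threshold_ms: float) -> bool:
    """True when p95 latency over the sample window exceeds the threshold."""
    return percentile(samples_ms, 95) > p95_threshold_ms
```

With 100 samples where 5 calls took 3 seconds, p95 is still the normal latency. Once 10 calls are slow, p95 jumps to 3 seconds. That is the "degraded but not failed" signal the paragraph describes.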

Health check endpoints: For integrations your application depends on, implement a health check that verifies the integration is working and expose it via a monitoring endpoint. This makes integration status visible in your deployment pipeline and operations tooling.
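The body of such an endpoint can be sketched independently of any web framework. Each entry in `checks` is a hypothetical probe that makes a cheap request to one integration and returns `True` if it behaved as expected. Returning 503 when any probe fails lets load balancers and deployment tooling react without parsing the body.

```python
import json
from typing import Callable, Dict, Tuple


def health_report(checks: Dict[str, Callable[[], bool]]) -> Tuple[int, str]:
    """Run each integration probe and return (HTTP status, JSON body) for a health endpoint."""
    results = {}
    for name, probe in checks.items():
        try:
            results[name] = "ok" if probe() else "failing"
        except Exception as exc:  # a probe that raises counts as failing
            results[name] = f"error: {type(exc).__name__}"
    healthy = all(value == "ok" for value in results.values())
    body = json.dumps({"status": "ok" if healthy else "degraded", "integrations": results})
    return (200 if healthy else 503), body
```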

Versioning and Change Management

External APIs change. Providers deprecate endpoints, modify response schemas, and add breaking changes. Without a change management strategy, an external API update can break your integration with no warning.

Pin to specific API versions when the provider supports it: Many APIs allow you to specify the version you are using in the request URL or a header. Use this when available. Unpinned integrations receive provider updates immediately, including breaking changes.
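As one concrete illustration, GitHub's REST API accepts the version in an `X-GitHub-Api-Version` header. The sketch below only builds the request. The version string is an example value, and other providers use their own header or URL conventions.

```python
import urllib.request

# Pinned version: change it deliberately, after reading the provider's changelog.
GITHUB_API_VERSION = "2022-11-28"


def github_request(path: str, token: str) -> urllib.request.Request:
    """Build (but do not send) a GitHub REST request with the API version pinned."""
    return urllib.request.Request(
        f"https://api.github.com{path}",
        headers={
            "Accept": "application/vnd.github+json",
            "Authorization": f"Bearer {token}",
            "X-GitHub-Api-Version": GITHUB_API_VERSION,
        },
    )
```

Keeping the version in one constant makes an upgrade a single, reviewable change rather than something that happens to you.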

Monitor provider changelogs and deprecation notices: Subscribe to provider developer newsletters, monitor their status pages, and watch their changelogs. Providers often give 90-180 days' notice before removing deprecated functionality. Acting on that notice before the deadline is far cheaper than scrambling after an integration breaks.

Schema validation on incoming data: External APIs return data that does not always match their documented schema. Validate incoming response data against an expected schema before processing it. Validation failures should surface clearly - either as errors or as logged warnings for non-critical field changes.
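A stdlib-only sketch of that split follows. Missing or mistyped fields you depend on raise an error, while unrecognized new fields are only logged. The "customer" shape and all field names are hypothetical.

```python
import logging
from typing import Any, Dict, Set

log = logging.getLogger("integrations.schema")

# Hypothetical expected shape of a "customer" response.
REQUIRED_FIELDS: Dict[str, type] = {"id": str, "email": str, "created": int}
KNOWN_OPTIONAL_FIELDS: Set[str] = {"name", "phone", "metadata"}


class SchemaError(ValueError):
    """A field the integration depends on is missing or has the wrong type."""


def validate_customer(payload: Dict[str, Any]) -> Dict[str, Any]:
    for field, expected_type in REQUIRED_FIELDS.items():
        if field not in payload:
            raise SchemaError(f"missing required field {field!r}")
        value = payload[field]
        # bool is a subclass of int in Python, so reject it explicitly for int fields.
        if not isinstance(value, expected_type) or (expected_type is int and isinstance(value, bool)):
            raise SchemaError(
                f"field {field!r} is {type(value).__name__}, expected {expected_type.__name__}"
            )
    unknown = set(payload) - set(REQUIRED_FIELDS) - KNOWN_OPTIONAL_FIELDS
    if unknown:
        # Non-critical change (e.g. the provider added fields): log it and continue.
        log.warning("unrecognized fields in customer response: %s", sorted(unknown))
    return payload
```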

Testing Integrations Effectively

Integration testing is frequently shortchanged because it is harder to set up than unit testing. Testing code that calls an external API requires either a real test environment with real credentials, a mock server that simulates the API, or a recorded fixture set that replays real API responses. Each approach has trade-offs.

Real test environments are the highest-fidelity option but introduce external dependencies that can make tests slow, flaky, or hard to run in CI. Mock servers - tools like WireMock or nock for Node.js - let you define expected requests and responses and run tests without external dependencies, but they can drift from the real API's behavior over time.

Contract testing, as implemented by tools like Pact, sits between these options. Consumers define what they expect from an API, and the test framework verifies that the actual provider meets those expectations. This approach works well for APIs your team controls but is harder to apply to third-party APIs.

Regardless of approach, integration tests should cover: authentication flows, rate limit handling, retry behavior on transient failures, webhook signature verification, and schema validation for API responses. These are the exact paths where production failures concentrate.
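For the retry path, Python's standard `unittest.mock` plays a role similar to a mock server. Here it scripts a sequence of failures and a success, with no external dependency. The sketch includes a minimal, hypothetical retry helper so it is self-contained. `sleep` is injected so the tests run instantly and can assert on the backoff delays.

```python
import unittest
from unittest import mock


class TransientError(Exception):
    """Hypothetical error type for a retryable failure (e.g. HTTP 429 or 503)."""


def call_with_retries(fn, max_attempts=3, sleep=None):
    """Minimal retry helper under test; sleep is injectable so tests run instantly."""
    for attempt in range(max_attempts):
        try:
            return fn()
        except TransientError:
            if attempt == max_attempts - 1:
                raise
            if sleep is not None:
                sleep(2 ** attempt)


class RetryBehaviorTest(unittest.TestCase):
    def test_retries_transient_failures_then_succeeds(self):
        api = mock.Mock(side_effect=[TransientError(), TransientError(), {"id": "cus_1"}])
        sleep = mock.Mock()
        self.assertEqual(call_with_retries(api, sleep=sleep), {"id": "cus_1"})
        self.assertEqual(api.call_count, 3)
        self.assertEqual([c.args[0] for c in sleep.call_args_list], [1, 2])

    def test_gives_up_after_max_attempts(self):
        api = mock.Mock(side_effect=TransientError())
        with self.assertRaises(TransientError):
            call_with_retries(api, max_attempts=3, sleep=mock.Mock())
        self.assertEqual(api.call_count, 3)

    def test_does_not_retry_non_transient_errors(self):
        api = mock.Mock(side_effect=ValueError("422 validation error"))
        with self.assertRaises(ValueError):
            call_with_retries(api, sleep=mock.Mock())
        self.assertEqual(api.call_count, 1)
```

Run it with `python -m unittest`. The same pattern of scripting failures with `side_effect` extends to the other paths in the list, such as a token refresh that fails or a webhook with a bad signature.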

Working with a development team that has handled these integration patterns across many client systems reduces the ramp-up time significantly. At 137Foundry, we see the same reliability failures repeatedly across different client integrations and have built standard patterns for preventing them. The data integration services page has more context on the types of integration work we handle.

For deeper reference: the APIs You Won't Hate community at apisyouwonthate.com publishes practical guidance on API design and consumption. The Stripe engineering blog at stripe.com/blog/engineering frequently covers reliability patterns from a team that runs one of the highest-volume API systems in the world. For webhook-specific patterns, Svix's documentation at docs.svix.com is a thorough reference even if you do not use their platform.