Most development teams don't lose control of AI-generated code all at once. It happens gradually. A few autocomplete suggestions accepted without careful reading, a commit pushed with output nobody fully understood, and six weeks later you're debugging logic that nobody on the team actually wrote or fully owns.

The good news is that integrating tools like GitHub Copilot or Cursor into a team's Git workflow is not fundamentally different from adopting any other productivity tool. You add checkpoints, set shared norms, and build review habits that make the output trustworthy. The discipline is the same. The stakes are just higher when the code arrives pre-written.

Here is a practical approach that holds up in real codebases with real teams. It does not require a new tool or a new approval process. It requires applying the same discipline to AI output that good teams already apply to everything else they ship.

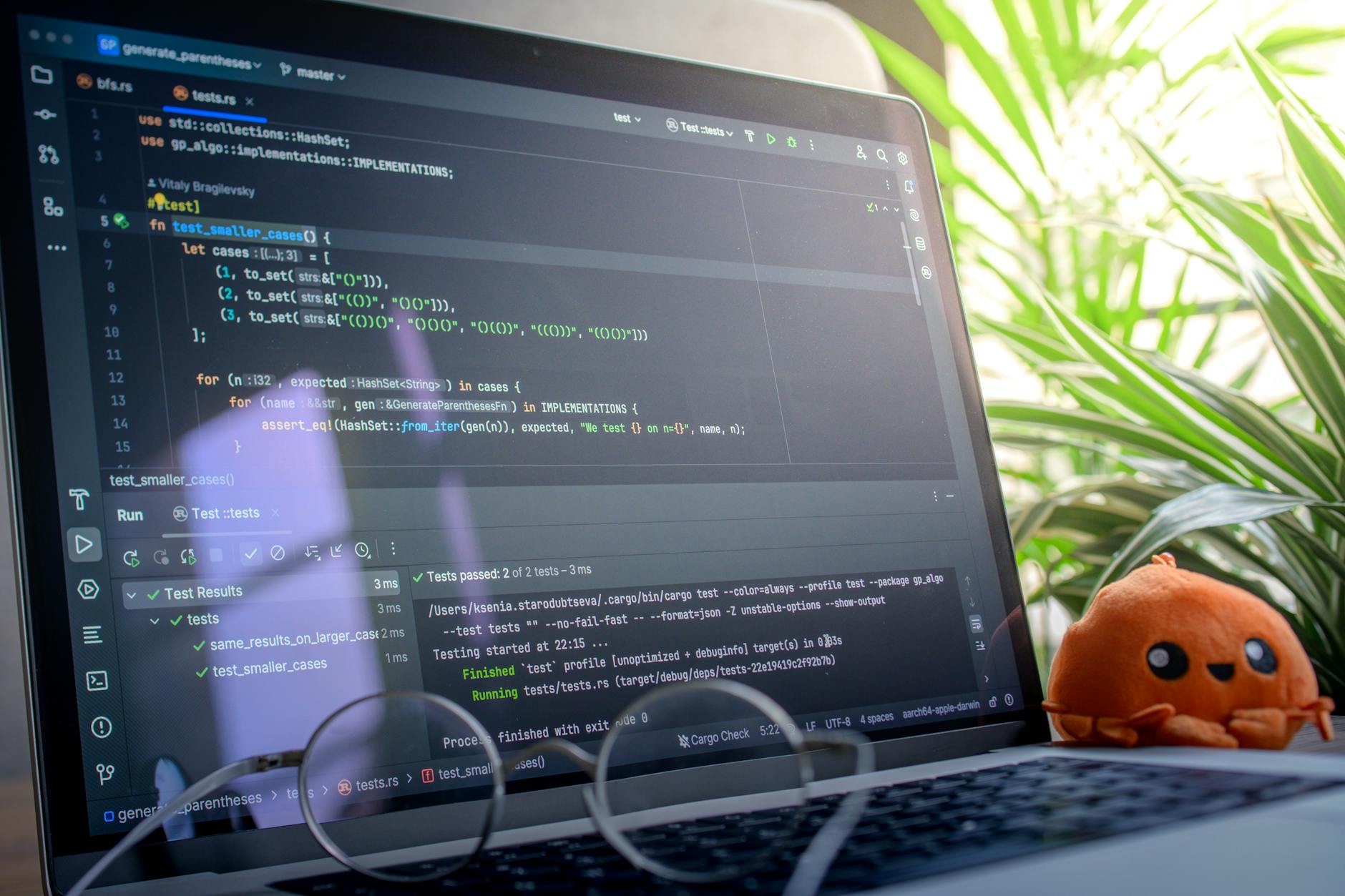

Photo by Daniil Komov on Pexels

Start With the Branch, Not the Merge

If your team doesn't already use short-lived feature branches for all new work, AI tools make that practice more urgent. When you're working with AI-generated code, isolation lets a reviewer see exactly what changed and why, without unrelated edits muddying the signal.

One useful convention is to prefix branches where significant AI output was used. Something like ai/feature-name or ai-assist/fix-auth-logic signals to reviewers that they should read with extra attention. It is not a judgment on quality. It is a flag that the review context is different.
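
As a rough sketch of how that convention could feed into tooling, the snippet below checks a branch name against the two example prefixes and picks a review label. The label names and the `review_label` helper are hypothetical, chosen only for illustration.

```python
# Prefixes taken from the examples above; adjust to whatever your team agrees on.
AI_BRANCH_PREFIXES = ("ai/", "ai-assist/")


def is_ai_assisted_branch(branch: str) -> bool:
    """Return True when the branch name starts with one of the agreed prefixes."""
    return branch.startswith(AI_BRANCH_PREFIXES)


def review_label(branch: str) -> str:
    """Pick a (hypothetical) PR label based on the branch prefix."""
    if is_ai_assisted_branch(branch):
        return "needs-careful-review"
    return "standard-review"
```

A check like this could run in CI to apply the label automatically, so the flag does not depend on anyone remembering to add it.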

This matters because reviewers naturally approach AI-generated code differently than hand-written code. With human code, you're mostly checking for logic errors and style violations. With AI output, you're also asking whether the code matches what was actually intended and whether it handles edge cases the AI had no way of knowing about. Different signal, different reading.

Prompt Discipline Before You Ever Hit Commit

The quality of AI-generated code depends heavily on the quality of the prompt. This sounds obvious, but in practice it means building prompt discipline into your normal day-to-day workflow rather than treating it as an afterthought.

Before accepting a suggestion, ask: did you give the tool enough context? That usually means including the file you're modifying, the relevant function signatures nearby, and a sentence or two about what the code needs to do. Vague prompts produce generic code. Specific prompts produce code that actually fits your codebase.

Consider maintaining a small team-level prompt library for repeated tasks. If you're using AI to generate tests, data transformations, or API clients regularly, standardized prompts reduce variance in output and make review faster because reviewers recognize the expected pattern. It sounds like extra overhead. In practice it saves review time on every PR that touches those areas.
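
A prompt library does not need to be elaborate. Here is a minimal sketch, assuming the team keeps templates in a shared Python module; the `PROMPTS` dictionary, the `unit_tests` task name, and the field names are all made up for this example.

```python
from string import Template

# Hypothetical team prompt library: task name -> template with required fields.
PROMPTS = {
    "unit_tests": Template(
        "Write pytest tests for the function below.\n"
        "File: $path\n"
        "Signature: $signature\n"
        "Behavior: $behavior\n"
        "Cover failure cases and boundary inputs."
    ),
}


def build_prompt(task: str, **context: str) -> str:
    """Fill a stored template; raises KeyError if a required field is missing."""
    return PROMPTS[task].substitute(**context)
```

Because `substitute` fails loudly on a missing field, nobody can send the prompt without supplying the file, signature, and intended behavior, which is the context the previous section asks for.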

Pre-Commit Hooks as a First Gate

A pre-commit hook runs automatically before a commit is finalized. It is one of the most underused checkpoints in a typical workflow and one of the most useful when AI tools are generating significant portions of code.

The pre-commit framework is a widely used way to configure these hooks. At minimum, run a linter and a type checker. For Python teams that means flake8 or ruff alongside mypy. For JavaScript and TypeScript teams, ESLint and tsc. The goal is not to catch everything. The goal is to catch the most common AI mistakes before they travel upstream to a PR where they cost everyone more time to untangle.

AI tools write syntactically correct code reliably. They are less reliable about respecting your project's conventions, import patterns, or typing contracts. Pre-commit hooks enforce those conventions automatically, which means you're not relying on reviewers to catch style drift or obvious type errors in every PR.
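
To make the idea concrete, here is a hand-rolled sketch of what such a gate does for a Python project. It is not the pre-commit framework's own configuration, which lives in a separate config file; it assumes `git`, `ruff`, and `mypy` are installed and on the PATH, and the function names are illustrative.

```python
import subprocess


def staged_python_files(names: list[str]) -> list[str]:
    """Keep only the Python files from a list of staged paths."""
    return [n for n in names if n.endswith(".py")]


def list_staged() -> list[str]:
    """Ask Git for files that are added, copied, or modified in the index."""
    result = subprocess.run(
        ["git", "diff", "--cached", "--name-only", "--diff-filter=ACM"],
        capture_output=True,
        text=True,
        check=True,
    )
    return result.stdout.splitlines()


def main() -> int:
    """Return 0 to allow the commit, 1 to block it."""
    files = staged_python_files(list_staged())
    if not files:
        return 0
    # Run the linter, then the type checker; stop at the first failure.
    for cmd in (["ruff", "check", *files], ["mypy", *files]):
        if subprocess.run(cmd).returncode != 0:
            return 1
    return 0

# In the actual hook script, finish with: raise SystemExit(main())
```

Saved as an executable `.git/hooks/pre-commit` script, a non-zero exit stops the commit. In practice most teams would let the framework manage this rather than maintain a script by hand.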

Photo by Ludovic Delot on Pexels

How to Review AI-Generated Code Effectively

Code review for AI-assisted work carries a higher bar -- not because the tools are unreliable, but because they lack your team's tacit knowledge. They don't know about the edge case that caused last quarter's incident. They don't know about the performance constraint buried in a comment six files away.

Effective review here means asking three questions the AI genuinely could not answer: does this handle failure gracefully, does this match the actual data contract, and is there something simpler? Those three questions, asked consistently, catch the majority of real problems in AI-assisted PRs.

The "did this run" test is also worth enforcing as a norm. If the code looks right but nobody has run it locally, do that before approving. AI tools generate code that reads fluently and works in most cases. When it fails, the cause is usually an input the AI had no reason to anticipate.

"AI coding tools are most useful when engineers treat them as fast typists, not architects. The judgment call still belongs to the person reviewing the diff." - Dennis Traina, founder of 137Foundry

Commit Message Conventions for AI-Assisted Work

Conventional Commits gives you a structured format that works well alongside AI tools. When a commit contains substantial AI-generated logic, noting that in the commit body (not the subject line) gives future maintainers context without cluttering your git log.

The format would look something like this:

feat(auth): add token refresh logic

AI-assisted implementation. Reviewed and tested locally.

Covers standard expiry case; manual fallback for revoked tokens added separately.

You do not need a policy requiring teams to flag every Copilot suggestion. That is impractical and creates friction without adding signal. But for substantial blocks of AI-generated logic, particularly anything touching business rules, external APIs, or shared state, marking it costs almost nothing and pays dividends in debugging months later.

When something breaks later, knowing a section of code was AI-generated tells the person debugging to look harder at edge cases and to verify the logic against the original requirements, not just against what the code says it does.
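
If you want tooling to pick up the marker, a small parser is enough. The sketch below loosely follows the Conventional Commits subject shape (`type(scope): description`) and looks for the phrase "AI-assisted" in the body; both the regular expression and the marker phrase are assumptions for this example, not part of any specification.

```python
import re

# Loose subject pattern: type, optional (scope), optional "!", then ": description".
SUBJECT_RE = re.compile(
    r"^(?P<type>[a-z]+)(\((?P<scope>[^)]+)\))?(?P<breaking>!)?: (?P<desc>.+)$"
)


def parse_commit(message: str) -> dict:
    """Split a commit message into subject parts plus an AI-assisted flag."""
    lines = message.strip().splitlines()
    subject = lines[0] if lines else ""
    body = "\n".join(lines[1:])
    match = SUBJECT_RE.match(subject)
    return {
        "valid_subject": match is not None,
        "type": match.group("type") if match else None,
        "scope": match.group("scope") if match else None,
        # The marker is expected in the body, not the subject line.
        "ai_assisted": "ai-assisted" in body.lower(),
    }
```

Run against the example message above, this reports a `feat` commit scoped to `auth` with the AI-assisted flag set, which is enough to filter a log when you are tracing a bug.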

CI/CD as the Final Gate

Your branch protection rules and CI pipeline are the last automated checkpoint before code merges. GitHub Actions makes it straightforward to run tests, lint checks, and build validation on every pull request. For AI-heavy workflows, those checks become load-bearing.

Consider adding a required test coverage threshold. AI tools generate code that passes surface-level tests without effort. Requiring meaningful coverage -- covering branches, not just lines -- catches the gaps a quick review might miss. You do not need a strict percentage that slows everyone down; a floor that prevents obviously untested AI output from merging is enough.
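
The floor itself is simple arithmetic. Here is a minimal sketch, assuming your coverage tool reports how many branches exist and how many were exercised; the 60 percent default is an arbitrary placeholder, not a recommendation.

```python
def branch_coverage(covered_branches: int, total_branches: int) -> float:
    """Return branch coverage as a percentage.

    Code with no branches is treated as fully covered.
    """
    if total_branches == 0:
        return 100.0
    return 100.0 * covered_branches / total_branches


def meets_floor(covered_branches: int, total_branches: int, floor: float = 60.0) -> bool:
    """True when branch coverage is at or above the agreed floor."""
    return branch_coverage(covered_branches, total_branches) >= floor
```

A CI step would read the two counts from the coverage report and fail the build when `meets_floor` returns False.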

A short PR checklist also helps. Before approving, each reviewer confirms: I ran this locally, I checked the boundary cases, and I understand what problem this code solves. That last item is the hardest to answer for AI-generated code, which is exactly why it belongs on the list.
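
One way to make the checklist enforceable is to put it in the PR description as a task list and have a script confirm every box is ticked. This sketch assumes markdown-style checkboxes (`- [x]`) and uses the three items above verbatim; how the PR body reaches the script is left out.

```python
import re

REQUIRED_ITEMS = (
    "I ran this locally",
    "I checked the boundary cases",
    "I understand what problem this code solves",
)

# Matches a ticked task-list line such as "- [x] I ran this locally".
CHECKED_RE = re.compile(r"^\s*[-*] \[[xX]\] (.+)$", flags=re.MULTILINE)


def unchecked_items(pr_body: str) -> list[str]:
    """Return the required checklist items that are not ticked in the PR body."""
    checked = {m.group(1).strip().lower() for m in CHECKED_RE.finditer(pr_body)}
    return [item for item in REQUIRED_ITEMS if item.lower() not in checked]
```

An empty result means the reviewer confirmed all three points. Anything else names exactly what is still outstanding.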


Assigning Reviews for AI-Assisted PRs

On teams with multiple reviewers, assign reviewers who know the relevant system well rather than whoever is next in rotation. AI tools produce code that looks plausible but may conflict with existing patterns in ways only a domain-aware reviewer would catch.
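
A simple ownership map is one way to route PRs to the people who know the code. The sketch below is illustrative only: the path prefixes, reviewer names, and the `rotation` fallback are invented. GitHub's CODEOWNERS file covers similar ground natively.

```python
# Hypothetical ownership map: path prefix -> reviewers who know that area.
OWNERS = {
    "billing/": ["dana"],
    "auth/": ["lee", "sam"],
}

# Used only when no changed file falls under a known area.
FALLBACK = ["rotation"]


def reviewers_for(changed_files: list[str]) -> list[str]:
    """Collect domain reviewers for the changed paths, preserving first-seen order."""
    picked: list[str] = []
    for path in changed_files:
        for prefix, people in OWNERS.items():
            if path.startswith(prefix):
                for person in people:
                    if person not in picked:
                        picked.append(person)
    return picked or FALLBACK
```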

If your team combines AI tool output with automated pipelines -- an area where web and AI development firm 137Foundry works regularly with clients -- pairing a pipeline-aware reviewer with each PR that touches automation logic reduces the risk of subtle integration issues. Generic review catches obvious bugs. Domain review catches the ones that matter.

Keeping review turnaround tight also matters here. Long-lived AI-assisted branches accumulate drift. If a PR sits open for a week, the code under review may no longer reflect the context the engineer had in mind when they accepted the original suggestion.

What Not to Do

A few patterns reliably cause problems when teams integrate AI tools without guardrails. Knowing them in advance is easier than discovering them after a production incident.

Accepting suggestions without running them is the most common failure mode. Code that compiles is not code that works. Build a local test step into your routine before any AI-generated code enters a commit. It usually takes only a few minutes and catches many of the obvious issues.

Treating AI-generated boilerplate as pre-verified is another. Boilerplate looks correct and usually is, but AI tools sometimes import packages that no longer exist or call APIs that changed between the version they trained on and the version you're running. Always verify that imports resolve and method signatures match your actual dependencies.
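
Checking that imports resolve can be automated with the standard library. The sketch below parses a source string, collects the top-level module names it imports, and reports any that cannot be found in the current environment. It only confirms a package is installed; it does not verify method signatures, and the function name is illustrative.

```python
import ast
import importlib.util


def unresolved_imports(source: str) -> list[str]:
    """Return top-level module names imported by `source` that cannot be found."""
    names: set[str] = set()
    for node in ast.walk(ast.parse(source)):
        if isinstance(node, ast.Import):
            names.update(alias.name.split(".")[0] for alias in node.names)
        elif isinstance(node, ast.ImportFrom) and node.level == 0 and node.module:
            # Relative imports (level > 0) are skipped; they depend on package layout.
            names.add(node.module.split(".")[0])
    return sorted(n for n in names if importlib.util.find_spec(n) is None)
```

Run it inside the same virtual environment the project uses, otherwise the result reflects the wrong set of installed packages.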

Finally, skipping review for "small" AI changes compounds over time. A one-line AI suggestion that modifies a boundary condition in a loop is not small. Every AI contribution needs human eyes on it before it merges, regardless of how minor it appears.

Putting It Together

The workflow that holds up in practice is not complicated: AI tools accelerate writing the first version of code, and human judgment determines whether that version ships. Branch hygiene, pre-commit gates, conventional commit messages, and CI enforcement create a pipeline that captures the speed advantage without trading away reliability.

The teams that get the most out of AI coding tools are not the ones that give the tools the most autonomy. They are the ones that build good checkpoints, enforce them consistently, and stick to them even when a PR feels routine. For more on how this plays out at the infrastructure level, the 137Foundry services page covers the approaches we use with development teams across different stack sizes.
